We discussed in a previous post how critical it is for card and board game designers to make efficient use of the space they have since, unlike digital games, a) the UI is static and b) you can’t patch it. In a video game, the minimap might be displayed only when you’re in the navigation screen and hidden otherwise, when that information is not needed. Analog designers do not have this luxury; a minimap is either printed on the map or it’s not. There are often clever design techniques to account for this (Imperial Struggle presents off-board cards to represent transient wars, for example), but these techniques reduce, rather than eliminate, the need for intelligent use of space.

GMT’s Imperial Struggle (pic from BoardGameGeek)

One way to use more space than needed (which, as we’ll see, might not be wasteful if done on purpose!) is to encode the same information more than once. On a Magic card, imagine printing the power and toughness of a creature in all four corners instead of just one. This is obviously wasteful, or at least unnecessarily cluttered.

Experienced game players and designers can intuit this, but we would like to be able to measure it; knowing you’re using space inefficiently is not the same as knowing how inefficiently and where.

Orthogonality

In information theory, we use the term orthogonal to mean “perfectly unrelated.” The term comes from linear algebra, where it refers to vectors that cannot be used to describe one another and share nothing in common. Imagine two intersecting streets, one traveling perfectly north-south, the other traveling perfectly east-west. Moving any amount along one moves you no distance at all in the direction of the other.

By contrast, imagine two intersecting roads, one traveling perfectly east-west and another, just as straight, angled slightly toward northeast-southwest. If you move some amount northeast along the second road, you will also have moved a certain amount east, as though you’d traveled on the east-west road. Not the same distance, but some distance. These roads share common travel. If the second road ran at a 45 degree angle to the first, each mile along it would carry you about 0.71 miles east (the cosine of 45 degrees).

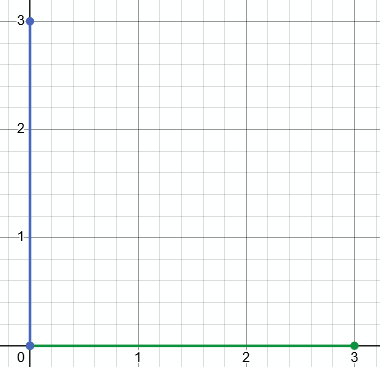

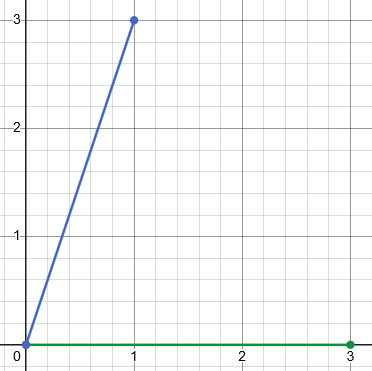
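The shared travel between two roads can be sketched as a projection of one unit vector onto another. This is a minimal illustration, not anything taken from the charts: the function name `shared_travel` and the choice of angles are assumptions made here.

```python
import math

def shared_travel(angle_degrees: float) -> float:
    """Distance moved due east per unit of distance travelled along a road
    that makes the given angle with the east-west road."""
    east = (1.0, 0.0)
    theta = math.radians(angle_degrees)
    road = (math.cos(theta), math.sin(theta))
    # The dot product of two unit vectors is the cosine of the angle between them.
    return east[0] * road[0] + east[1] * road[1]

for angle in (0, 10, 45, 90):
    c = shared_travel(angle)
    # c is the share of distance; c * c is the share of squared length.
    print(f"{angle:>2} degrees: {c:.3f} east per unit travelled, squared share {c * c:.3f}")
```

At 90 degrees the shared travel is zero (up to floating-point error), which is the orthogonal case. At 45 degrees the distance share is about 0.707, while the squared share is one half.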

This kind of linear algebra applies directly to our problem (we’ll point out where, but pass it by). In the charts above, simple algebra tells us how much the two vectors share. It’s not always easy (or efficient) to represent a system as vectors, though, so we’ll capture a similar concept with information theory instead. In this case, we’re going to use conditional entropy.

Entropy Again

We talked previously about what information entropy is: it is the amount of information encoded in a random variable, sometimes described as the amount of surprise in a variable. There are a ton of variants and follow-on representations you can derive from the concept of entropy, and one of them is conditional entropy.

Conditional entropy is the amount of information encoded in a variable given the value of another variable. If someone tells you they were in a car crash, you would be very surprised; being in a car crash is unusual. If someone tells you they were in a car crash and you know that they are a professional stunt driver, you would be less surprised. The same value of a variable represents more or less information depending on knowledge of other variables.

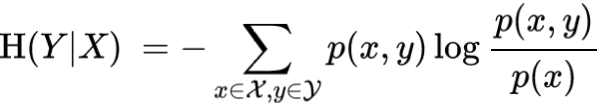

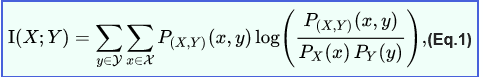
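The car crash intuition can be put in numbers with the surprisal of a single outcome, which is the negative log of its probability; entropy is the average surprisal over all outcomes. A minimal sketch, in which both probabilities are hypothetical values picked only to illustrate the direction of the effect:

```python
import math

def surprisal_bits(probability: float) -> float:
    """Information content, in bits, of observing an outcome with this probability."""
    return -math.log2(probability)

# Hypothetical probabilities, invented for illustration only.
p_crash = 0.01                      # a random acquaintance was in a crash
p_crash_given_stunt_driver = 0.5    # the same claim from a professional stunt driver

print(f"No context:   {surprisal_bits(p_crash):.2f} bits of surprise")
print(f"Stunt driver: {surprisal_bits(p_crash_given_stunt_driver):.2f} bits of surprise")
```

The same outcome carries fewer bits once the conditioning fact makes it more likely.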

Mathematically, conditional entropy (and feel free to skip this part if you don’t care) looks a lot like the definition for entropy, but with joint probabilities and with a joint probability divided by a given probability inside the logarithm (also note the double summation over two variables).

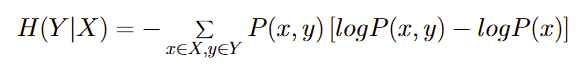

One of the reasons I really like entropy mathematically (as opposed to probability or linear algebra) is because of how intuitive it can be. That equation up there might look scary or arbitrary, but if you expand the logarithm, you get this:
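Written out, with $Y$ as the variable being described and $X$ as the one already known (the symbols $X$, $Y$, and $p$ are assumptions here, following the standard textbook definition):

$$
H(Y \mid X) \;=\; -\sum_{x}\sum_{y} p(x,y)\,\log_2\frac{p(x,y)}{p(x)}
\;=\; \sum_{x}\sum_{y} p(x,y)\,\Big[\big(-\log_2 p(x,y)\big) - \big(-\log_2 p(x)\big)\Big]
$$

Summing the two bracketed terms separately gives the identity $H(Y \mid X) = H(X,Y) - H(X)$.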

This is clearer because of the value inside the square brackets; it’s an expected value of the information of the joint probability MINUS the information of the marginal probability. In other words, how much information is stored in these two variables together that isn’t stored in one? You’ll see what I mean in the first example.

Conditional Entropy is the amount of information needed to describe the outcome of a random variable given the outcome of another.

This is relevant to game designers because we can measure, objectively, how much information we’re representing in multiple places on a card or board, and therefore how much space we’re wasting. In the case of printing the power/toughness of a Magic creature in quadruplicate, we can intuitively say that all four representations encode the same information, and we can point to where the duplication is and what information is duplicated. Sometimes it’s not that obvious or that discrete.

We’ll look at two examples to understand the concept and the application. First, we’ll look at Cardweaver, a deckbuilding game with great mechanics and an atrocious rulebook, for an easy application and calculation that builds intuition. Then we’ll look at Pax Pamir to see how non-orthogonality might creep into a game without the designer knowing AND why you might tolerate or even induce inefficient representation.

I’ll say this again later, but to get ahead of this: perfectly orthogonal iconography is a fine goal, but it’s a better tool than a goal; we’ll see how optimizing for perfectly orthogonal design might end up with a worse design for one reason or another. Just as a skilled poet is allowed to break the rules of meter, a skilled designer can break the rules of optimization, but only because they understand the rule they’re breaking.

Examples

Cardweaver

Cardweaver is a super fun asymmetric us-vs-them deckbuilder. Please look online for tutorials because the rulebook is not great.

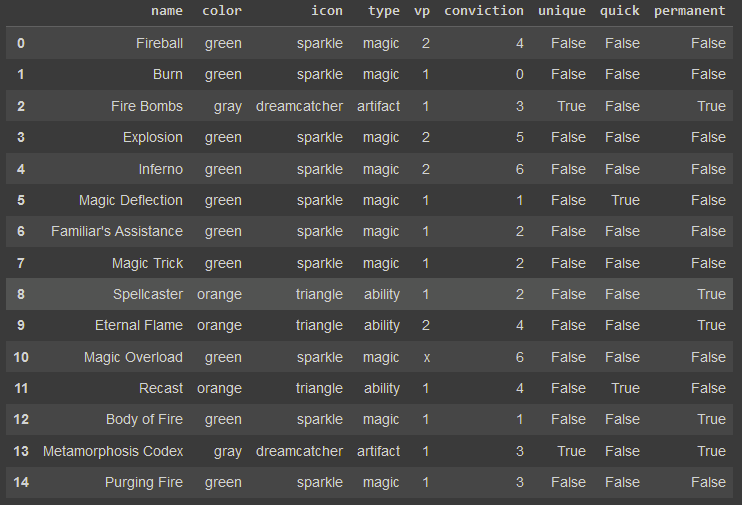

Each card has some visual design elements: color, an icon in the upper left, the card name in the top center, a type in the center, a picture at the top, text at the bottom, a conviction cost in the lower right, and victory points in the bottom center. There are also optional icons on the left side of the card depicting how fast the card can be played, whether it stays in play, or whether it is unique.

Let’s look at the cards in the Pyromancer’s deck:

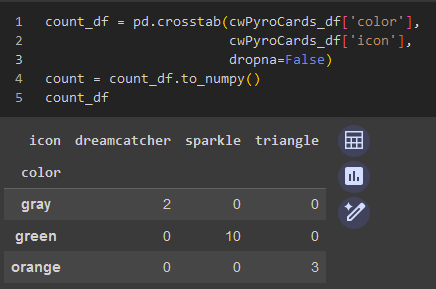

One thing that will jump out to veteran players and designers is that there are three visual elements that encode the same information: the color, the icon, and the type. A card with an orange color is always an ability and always has a triangle icon, for example. Let’s measure that, starting by counting the co-occurrences of each.

Linear algebra enjoyers will immediately recognize the eigenvalue decomposition opportunity here; that’s super cool stuff and we’ll get to it one day, but we’ll pass it by for now.

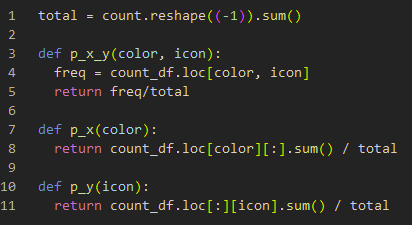

Since we know how many cards there are and how many occurrences of each pair there are (and can calculate the marginal occurrences by summing by row or column), we can use that to estimate probabilities (Bayesians in shambles, crying in the corner, clutching their credible intervals). We need the joint probability P(x,y) and the associated marginals, P(x) and P(y) for each possible x and y.

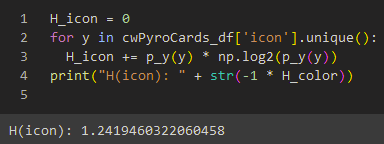
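Turning co-occurrence counts into probability estimates can be sketched as follows. The deck below is hypothetical (the real Pyromancer counts are in the table above and are not reproduced here), and the helper name `joint_and_marginals` is an assumption made for this example.

```python
from collections import Counter

# Hypothetical (color, icon) pairs for a ten-card deck; NOT the real Pyromancer counts.
cards = [("orange", "triangle")] * 5 + [("red", "circle")] * 3 + [("grey", "square")] * 2

def joint_and_marginals(pairs):
    """Estimate P(x, y), P(x), and P(y) from a list of observed (x, y) pairs."""
    n = len(pairs)
    # Joint probability: relative frequency of each pair.
    joint = {xy: count / n for xy, count in Counter(pairs).items()}
    # Marginals: sum the joint over the other variable (row and column sums).
    p_x, p_y = Counter(), Counter()
    for (x, y), p in joint.items():
        p_x[x] += p
        p_y[y] += p
    return joint, dict(p_x), dict(p_y)

joint, p_color, p_icon = joint_and_marginals(cards)
print(joint)
print(p_color)
print(p_icon)
```

This is the plain frequency estimate the text describes; a Bayesian treatment would put a prior over these probabilities instead.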

Let’s look at how much entropy is represented by the icon of a card:

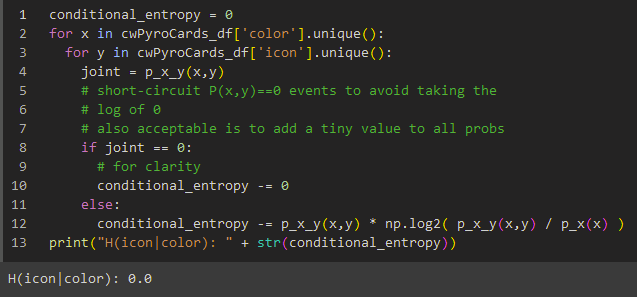

The icon stores 1.24 Shannon bits of entropy. Now let’s compute the conditional entropy of the icon given the color: if we know the color, how much entropy is left for the icon to capture?

Zero. If we know the color, there is no additional entropy left for the icon to represent. The math lines up with our intuition; the icon and the color encode exactly the same information.

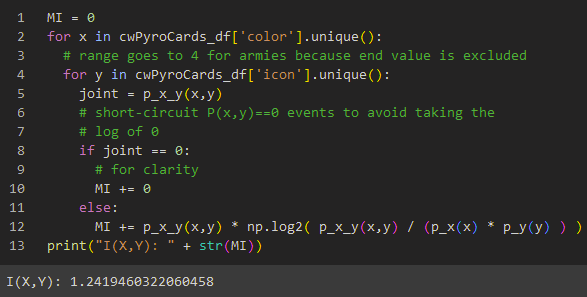

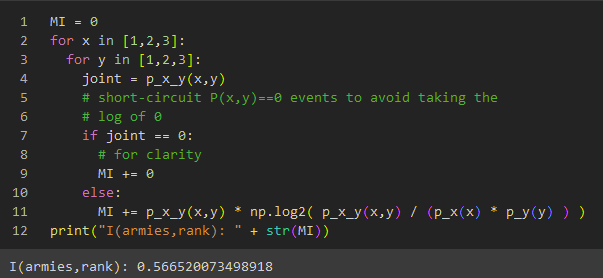
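The same calculation can be sketched in a few lines of Python. The deck here is hypothetical, built so that each color maps to exactly one icon as in the Pyromancer deck, so the entropy it prints will not match the 1.24 bits above; the function names are assumptions for this example.

```python
import math
from collections import Counter

# Hypothetical (color, icon) pairs; NOT the real Pyromancer deck counts.
cards = [("orange", "triangle")] * 5 + [("red", "circle")] * 3 + [("grey", "square")] * 2

def entropy(values):
    """Shannon entropy in bits of the empirical distribution of `values`."""
    n = len(values)
    return sum((c / n) * math.log2(n / c) for c in Counter(values).values())

def conditional_entropy(pairs):
    """H(Y | X) in bits for (x, y) pairs: sum of p(x,y) * log2(p(x) / p(x,y))."""
    n = len(pairs)
    x_counts = Counter(x for x, _ in pairs)
    return sum((c / n) * math.log2(x_counts[x] / c)
               for (x, _), c in Counter(pairs).items())

icons = [icon for _, icon in cards]
print(f"H(icon)         = {entropy(icons):.2f} bits")
print(f"H(icon | color) = {conditional_entropy(cards):.2f} bits")
```

For this made-up deck the conditional entropy is zero, because once the color is known there is only one icon it can be paired with.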

We can check to see how much information these variables share by computing their mutual information: how much information do these variables both represent?

Mutual information is calculated with the following equation, which, again, looks quite similar to the conditional entropy equation.

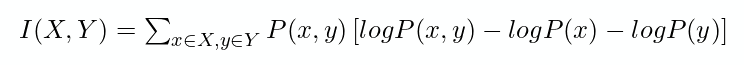

As before, expanding the log helps us understand this intuitively:
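In the standard notation (the symbols $X$, $Y$, and $p$ are assumptions here, and mutual information is written $I(X;Y)$):

$$
I(X;Y) \;=\; \sum_{x}\sum_{y} p(x,y)\,\log_2\frac{p(x,y)}{p(x)\,p(y)}
\;=\; \sum_{x}\sum_{y} p(x,y)\,\Big[\log_2 p(x,y) - \log_2 p(x) - \log_2 p(y)\Big]
$$

Taking the three bracketed terms one at a time gives the equivalent form $I(X;Y) = H(X) + H(Y) - H(X,Y)$.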

Inside the brackets again: if you start with the information of the combination of these two variables and subtract each individual variable out, what are you left with? If these two variables overlap, you’ll double-subtract that amount of information, so negating that (note how this equation doesn’t have a negative sign in front of it like the other equations) tells you how much information they share. If they’re perfectly orthogonal, you don’t double-subtract anything, so you’ll be left with 0 information. Let’s see what the mutual information is between Color and Icon here:

As expected, the amount of information shared between these two variables is the amount of information each variable contains: 1.24 bits. Even if we didn’t immediately notice the redundant iconography, the math would have told us.

Also note that conditional entropy is asymmetric; H(X|Y) is not necessarily the same as H(Y|X). H(home state | home country) > 0, but H(home country | home state) = 0: once someone tells you that they’re from California (a state in the USA), they don’t add any information by telling you they’re from the USA, whereas someone who tells you they’re from the USA has not told you which state. Mutual information, however, is symmetric: I(home state, home country) = I(home country, home state), since it measures the information the two share.
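The asymmetry can be checked numerically. A minimal sketch, using a hypothetical ten-person population and treating home state (or province) and home country as the two variables; the data and function names are invented for this example.

```python
import math
from collections import Counter

def entropy(values):
    """Shannon entropy in bits of the empirical distribution of `values`."""
    n = len(values)
    return sum((c / n) * math.log2(n / c) for c in Counter(values).values())

def conditional_entropy(pairs):
    """H(second | first) in bits for a list of (first, second) pairs."""
    n = len(pairs)
    first_counts = Counter(first for first, _ in pairs)
    return sum((c / n) * math.log2(first_counts[first] / c)
               for (first, _), c in Counter(pairs).items())

# Hypothetical population of (home state or province, home country) pairs.
people = ([("California", "USA")] * 4 + [("Texas", "USA")] * 3
          + [("Ontario", "Canada")] * 3)
states = [s for s, _ in people]
countries = [c for _, c in people]

h_country_given_state = conditional_entropy(people)
h_state_given_country = conditional_entropy([(c, s) for s, c in people])

# Mutual information computed from each side; the two values should agree.
mi_from_country = entropy(countries) - h_country_given_state
mi_from_state = entropy(states) - h_state_given_country

print(f"H(country | state) = {h_country_given_state:.3f}")
print(f"H(state | country) = {h_state_given_country:.3f}")
print(f"I computed both ways: {mi_from_country:.3f}, {mi_from_state:.3f}")
```

Knowing the state leaves nothing to learn about the country, while knowing the country still leaves the state uncertain, yet the mutual information comes out the same from either direction.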

Pax Pamir 2E

The iconography that inspired this examination is that of Pax Pamir Second Edition, one of my favorites. I suspected that cards that add an army always add a number of armies equal to their rank; a rank 1 card that adds armies will always add 1 army, etc. I was wrong; I found one, and then a few, counterexamples. With a few counterexamples, but not many, it seemed that some information was shared between Rank and Army count, but not all of it. Unlike the Cardweaver example, intuition here is insufficient because the specifics aren’t as simple; we need the math.

Recall the makeup of a Pax Pamir Court card:

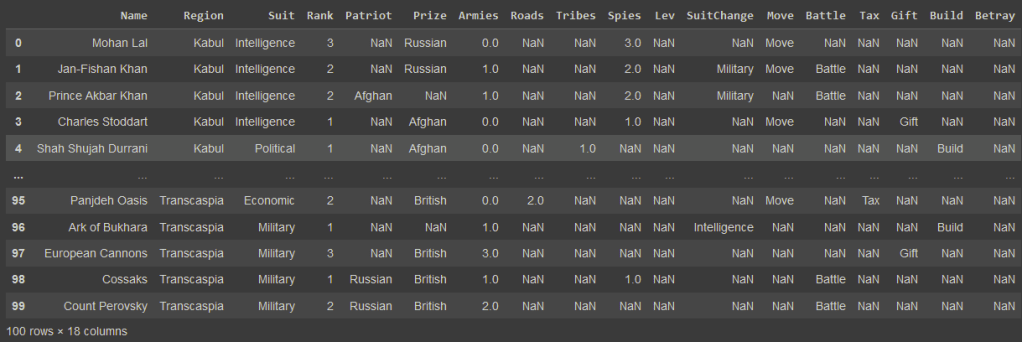

If we winnow out the cards that do not add armies, we end up with this count:

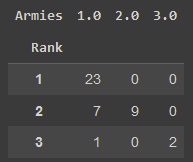

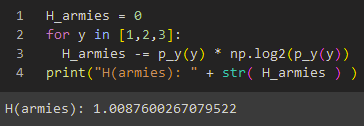

Again, using these counts as a basis for estimating probability, we can calculate the entropy in the set of Army values:

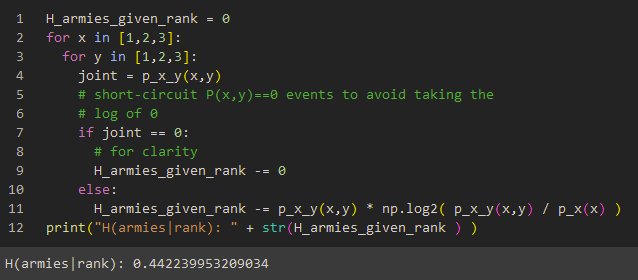

AND we can calculate the entropy left for the Army value to capture if we know the card’s Rank:

Since we’re only computing over two variables, the difference is the mutual information:
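In symbols, writing $R$ for Rank and $A$ for Army (labels assumed here for brevity), the two quantities are:

$$
H(A \mid R) \;=\; -\sum_{r}\sum_{a} p(r,a)\,\log_2\frac{p(r,a)}{p(r)},
\qquad
I(R;A) \;=\; H(A) - H(A \mid R)
$$

The second identity is what "the difference is the mutual information" refers to: whatever part of the Army entropy is not left over once Rank is known must be shared with Rank.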

The mutual information, the amount of information encoded in both the Army value and the Rank value, is over half of the information encoded in the Army variable!
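A partially redundant pair of variables can be explored with a short script. The counts below are hypothetical, invented to resemble "mostly army equals rank, with a few exceptions"; they are NOT the actual Pax Pamir card counts, and the function names are assumptions for this sketch.

```python
import math

# Hypothetical counts of (rank, armies added) pairs; NOT the real Pax Pamir data.
pair_counts = {(1, 1): 6, (1, 2): 1, (2, 2): 5, (2, 1): 1, (3, 3): 3, (3, 2): 1}

def entropy_from_counts(counts):
    """Shannon entropy in bits of the distribution implied by a dict of counts."""
    total = sum(counts.values())
    return sum((c / total) * math.log2(total / c) for c in counts.values() if c)

def summarize(counts):
    """Return H(Army), H(Army | Rank), and I(Rank; Army) in bits."""
    rank_counts, army_counts = {}, {}
    for (rank, army), c in counts.items():
        rank_counts[rank] = rank_counts.get(rank, 0) + c
        army_counts[army] = army_counts.get(army, 0) + c
    h_army = entropy_from_counts(army_counts)
    # Chain rule: H(Army | Rank) = H(Rank, Army) - H(Rank).
    h_army_given_rank = entropy_from_counts(counts) - entropy_from_counts(rank_counts)
    mutual = h_army - h_army_given_rank
    return h_army, h_army_given_rank, mutual

h_army, h_army_given_rank, mutual = summarize(pair_counts)
print(f"H(Army)        = {h_army:.3f} bits")
print(f"H(Army | Rank) = {h_army_given_rank:.3f} bits")
print(f"I(Rank; Army)  = {mutual:.3f} bits ({mutual / h_army:.0%} of H(Army))")
```

Swapping in real counts from a card list gives the share of the Army information that Rank already carries, which is the number a designer would weigh against the cost of moving that rule into the rulebook.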

What does this mean from a design perspective? Well, it means we can “normalize out” this information if we want. Instead of including icons or any other card representation for the army count at all, we could use a single icon that represents adding armies in general and state in the rulebook that the icon means to add armies equal to rank. For the cards that deviate from that rule, we could add text saying “Add an additional army.” We could do the same thing with patriots that add armies of a specific nationality: just write in the rulebook that patriots add armies of their nationality when they add armies.

Here’s why orthogonality is best thought of as a tool rather than a goal: the inefficient representation we find in Pax Pamir is much more intuitive, has fewer (zero) exceptions or special cases, and requires less reliance on the rulebook than the perfectly orthogonal solution we just described. Denormalizing this information and presenting it to the player takes up space inefficiently, but that’s not a design flaw, it’s a cost paid to reduce the cognitive load on the player who gets a game whose rulebook doesn’t need to be consulted during play.

The limited space on a board tempts the designer to lift rules from the board or card and store them in the rulebook where space is unlimited. That’s fine as long as the designer has a good, perhaps quantitative, grasp of the tradeoff. An inefficient use of space buys a more efficient use of in-game time, as the mechanics are mostly in front of the player.

Conclusion

As before, these kinds of metrics are tools in the toolbox of a game designer to understand their game rather than an objective function to be maximized or minimized per se; it’s easy to see how slightly less shared information, or increased orthogonality between representations, could make the design of Cardweaver better, but it’s just as easy to see how it could make Pax Pamir worse. Then again, it’s up to the designer to decide what user experience they want, since it is a tradeoff. In a detailed wargame like Here I Stand, the designer has much more license to load the rulebook down with content simply because the content relative to the board size is so huge. With an aim for elegance and parsimony of mechanics, the challenge becomes much greater and the judgment call much harder as to what information gets what space. Consider using conditional entropy and mutual information in your game designs to get a quantitative sense of what qualitative experience you’re designing for.